Ethical Hacking with AI: Red Teaming with Automated Tools

Explore how AI is revolutionizing ethical hacking and Red Teaming. Discover the strategic benefits and governance requirements of automated adversarial emulation.

Introduction: The Evolution of the Penetration Test

For decades, the standard method for validating an organization’s security posture has been the annual penetration test. A team of ethical hackers is hired to manually probe the perimeter, chain together exploits, and produce a PDF report detailing the vulnerabilities. However, in the era of Agile development, CI/CD pipelines, and cloud-native microservices, an infrastructure changes daily. A point-in-time assessment is often obsolete the moment the report is delivered.

To match the speed of modern development and the sophistication of threat actors, IT leaders are transforming how they validate security. The industry is moving from manual, periodic penetration testing to continuous, AI-driven Red Teaming. By weaponizing Large Language Models (LLMs) and autonomous agents for ethical hacking, organizations can continuously stress-test their defenses, mimicking the exact tactics, techniques, and procedures (TTPs) of advanced persistent threats (APTs) at scale.

1. The Automation of Reconnaissance and Weaponization

The traditional Cyber Kill Chain begins with reconnaissance—a highly tedious phase where hackers scour public records, scan ports, and map the target’s attack surface. AI dramatically accelerates this phase, turning days of manual enumeration into minutes of automated analysis.

- Algorithmic OSINT: Ethical hackers now deploy AI agents to autonomously scrape GitHub repositories, dark web forums, and LinkedIn profiles. These models use Natural Language Processing (NLP) to parse unstructured data, identifying leaked credentials, exposed API endpoints, and organizational hierarchies that can be targeted for spear-phishing.

- Intelligent Vulnerability Mapping: Instead of relying on rigid, signature-based vulnerability scanners (like Nessus or Qualys) that generate thousands of false positives, AI-driven red team tools ingest the scan data and correlate it with external threat intelligence. The AI identifies which specific vulnerabilities are actively being exploited in the wild and maps out the most probable attack paths into the network.

2. Dynamic Exploit Generation and Evasion

Once a vulnerability is found, the attacker must exploit it. Historically, ethical hackers relied on static exploit databases (like Exploit-DB or Metasploit). If a target system had a slightly different operating system version or a custom Web Application Firewall (WAF), the static exploit would fail, requiring the hacker to manually rewrite the payload. Techniques like adversarial examples are also being repurposed for offensive red team exercises.

Generative AI fundamentally changes this dynamic.

- Payload Mutation: Red teams use LLMs to automatically generate and mutate exploit code on the fly. If a defensive system blocks an initial prompt injection or SQL injection payload, the AI can instantly generate fifty obfuscated variations—using different encodings, polyglots, and syntax structures—until one successfully bypasses the WAF.

- Bypassing EDR: AI is also being used to automate the evasion of Endpoint Detection and Response (EDR) systems. Machine learning models analyze the behavioral signatures that EDRs look for and automatically rewrite malware wrappers to randomize memory allocation and API calls, ensuring the red team’s payload remains completely undetected during the exercise.

3. The Rise of CART (Continuous Automated Red Teaming)

The ultimate strategic goal of integrating AI into ethical hacking is the realization of Continuous Automated Red Teaming (CART).

CART platforms utilize autonomous AI agents that operate 24/7 inside the corporate network.

- Adversarial Emulation: These agents are programmed to safely emulate the behavior of known ransomware groups or nation-state actors. Leveraging fuzzing at scale, they constantly attempt to move laterally, escalate privileges, and exfiltrate dummy data.

- Validating the Blue Team: For the Chief Information Security Officer (CISO), CART provides empirical evidence of the organization’s defensive readiness. If an IT engineer accidentally misconfigures a Kubernetes cluster on a Tuesday, the CART agent will autonomously discover and exploit it on Wednesday, triggering an alert to the Security Operations Center (SOC) before a real attacker can find it.

4. Governance and the Risks of Autonomous Hacking

While the strategic benefits of AI-driven red teaming are immense, deploying autonomous hacking agents introduces severe operational risks. You are, by definition, releasing a piece of self-thinking malware into your own enterprise environment.

For technology managers, strict governance and “rules of engagement” are mandatory:

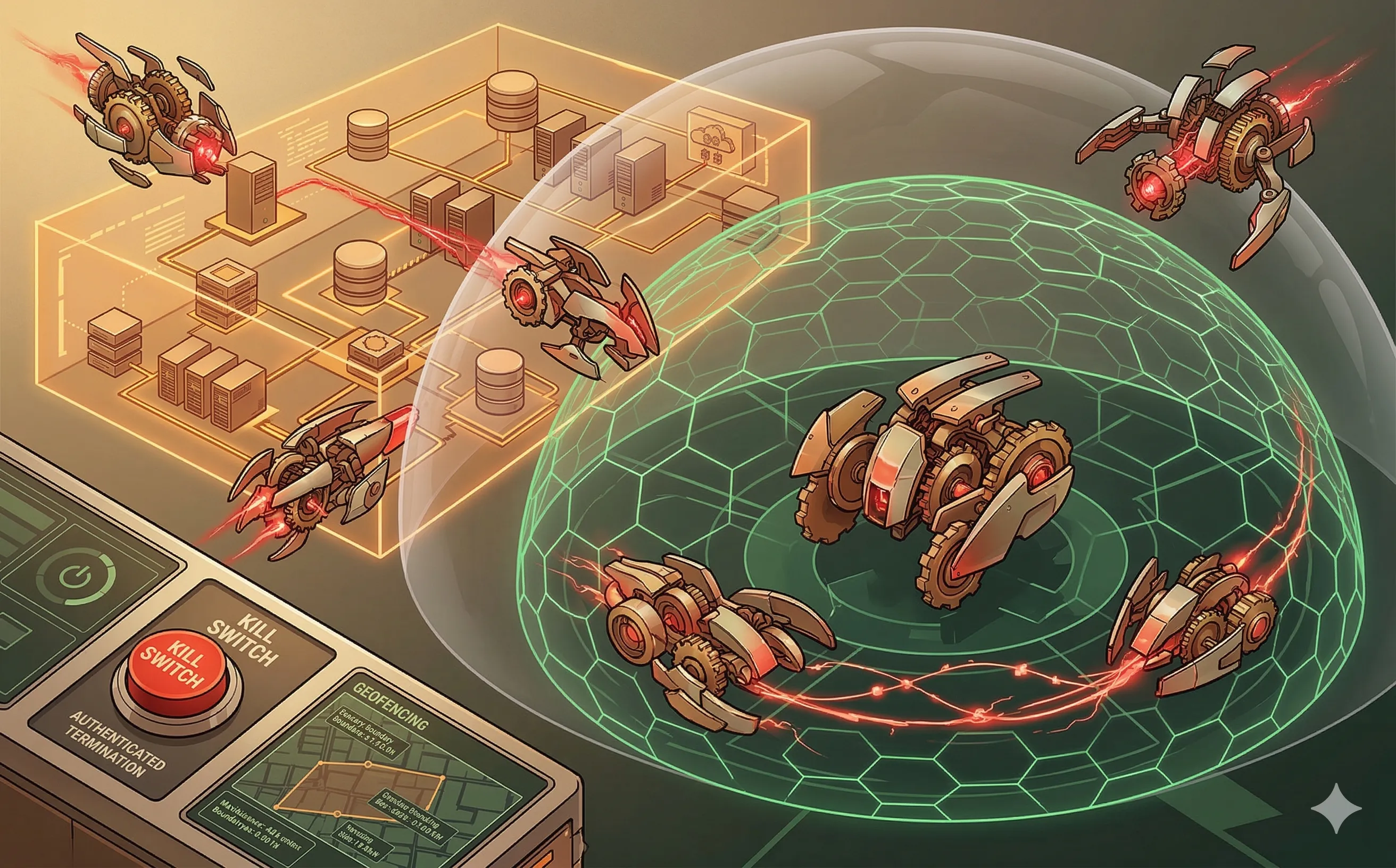

- Scope and Boundary Control: Autonomous agents must be strictly geofenced. A misconfigured AI could easily wander out of the designated testing environment and accidentally launch a denial-of-service attack against a critical production database, causing a massive business outage.

- The “Kill Switch”: Any AI-driven red teaming platform must have a deterministic, heavily authenticated “kill switch.” If the autonomous agent begins acting unpredictably or consuming too many system resources, the security team must be able to instantly terminate all its active processes and network connections.

- Ethical Guardrails: When using LLMs to generate spear-phishing campaigns for employee training, organizations must set strict ethical boundaries. The AI must be restricted from using highly manipulative emotional triggers (like faking a family emergency or a termination notice) that could cause genuine psychological distress to the workforce.

Conclusion

The asymmetry of cybersecurity—where the defender must be right 100% of the time, and the attacker only needs to be right once—is slowly being balanced by AI. By embracing Continuous Automated Red Teaming and AI-driven ethical hacking, IT leaders can move away from compliance-driven checklists and adopt a truly adversarial mindset. However, harnessing this power requires rigorous governance to ensure that the autonomous tools designed to protect the network do not become the ones that bring it down.