GDPR & LLMs: The 'Right to Be Forgotten' in a Neural Network

Explore the technical and legal conflict between the GDPR's Right to Erasure and the probabilistic architecture of Large Language Models, and how enterprise IT can adapt.

Introduction: The Irreversible Memory of AI

In the European Union, data privacy is not just a best practice; it is a fundamental human right encoded into the General Data Protection Regulation (GDPR). One of the most powerful and heavily enforced provisions of the GDPR is Article 17: the “Right to Erasure,” commonly known as the Right to Be Forgotten.

For traditional IT systems, complying with Article 17 is straightforward, albeit sometimes complex to execute. If a user requests their data be deleted, a database administrator executes a DELETE command across the SQL tables, purges the backups, and the data is gone.

However, the rapid adoption of Large Language Models (LLMs) has created a massive collision between legal mandates and computer science. How do you delete a specific person’s data from an AI that has already ingested it, learned from it, and baked it into a billion-parameter neural network? For IT architects and enterprise leaders, solving this paradox is critical to deploying AI legally — especially given the strict mandates of the EU AI Act.

1. The Fundamental Architectural Conflict

To understand the compliance nightmare, we must understand how an LLM stores information. An LLM is not a database; it is a probabilistic engine.

- The Database Paradigm: Traditional databases store discrete facts in rows and columns. Removing a row does not affect the other rows.

- The Neural Network Paradigm: When an LLM is trained on a dataset containing personal data, that text is broken down into tokens. The model learns the statistical relationships between those tokens, adjusting billions of mathematical weights across its neural layers. The personal data ceases to exist as a discrete “file” and becomes an abstracted, mathematical representation distributed across the entire network.

Because the data is distributed contextually, there is no “Control+F” or DELETE function in a trained neural network. You cannot surgically extract one person’s data without fundamentally altering the mathematical weights that dictate the model’s overall intelligence and performance.

2. The Expensive Brute-Force Solution

Currently, if an enterprise trains a proprietary LLM on customer data and a customer invokes their Right to Be Forgotten, the only mathematically guaranteed way to erase them from the model is the brute-force method: Retraining from Scratch.

The organization must:

- Delete the user’s data from the original training corpus.

- Spin up the GPU clusters.

- Retrain the entire foundational model or fine-tuned iteration from zero.

For massive models, this costs millions of dollars and takes weeks of compute time. From a managerial and financial perspective, retraining an enterprise model every time a single user submits a GDPR request is completely unsustainable.

3. Machine Unlearning: The Emerging Frontier

To bridge this gap, AI researchers are pioneering a new subfield of computer science called Machine Unlearning. The goal is to develop algorithms that can tell a neural network to “forget” specific training data without having to retrain from scratch.

- Approximate Unlearning: Techniques are being developed to identify which specific weights were most heavily influenced by the targeted data point and mathematically “reverse” or penalize those weights.

- The Risk of Catastrophic Forgetting: The primary challenge with machine unlearning is that aggressively altering weights to make the model forget one fact often causes a ripple effect, causing the model to spontaneously forget completely unrelated, crucial information or degrading its overall language capabilities.

While promising, true Machine Unlearning is still largely experimental and has not yet reached the maturity required for guaranteed legal compliance at an enterprise scale.

4. Pragmatic Architectures for Enterprise Compliance

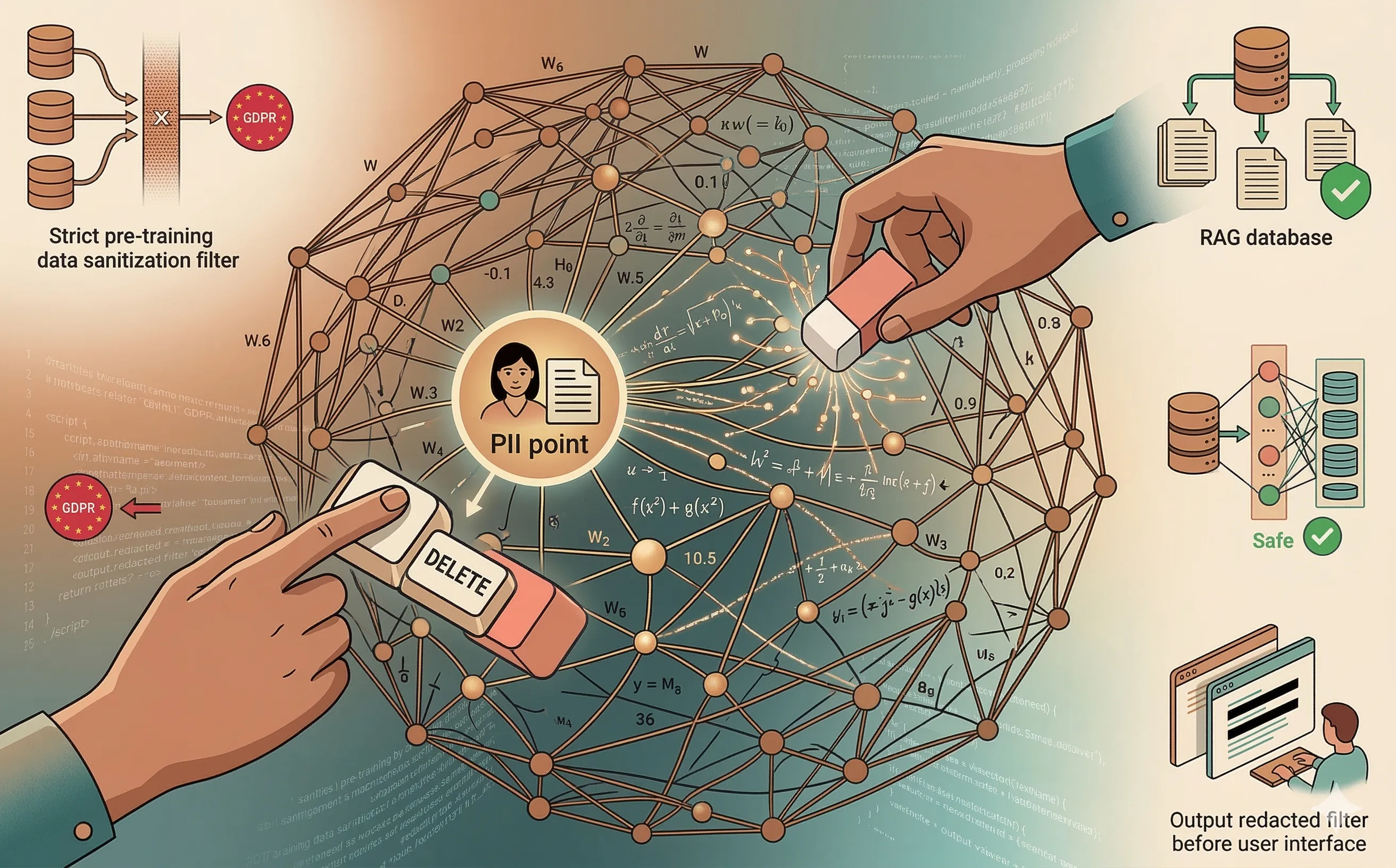

Because the underlying technology of “unlearning” is not yet mature, IT architects must rely on system-level workarounds to maintain GDPR compliance while leveraging AI.

- Strict Data Sanitization Before Training: The most effective defense is shifting left. Enterprise teams must utilize aggressive Data Loss Prevention (DLP) and PII redaction pipelines to scrub all personal data from the corpus before the model ever sees it during the training or fine-tuning phase. This also helps mitigate data poisoning risks by enforcing strict control over what enters the training pipeline.

- Retrieval-Augmented Generation (RAG): Instead of fine-tuning the model on sensitive customer data, organizations are adopting RAG architectures. In a RAG system, the LLM itself contains no personal data. When a user asks a question, the system queries a traditional, secure database for the relevant facts, temporarily feeds those facts into the LLM’s prompt window, and generates an answer. If a user requests deletion, IT simply deletes their record from the traditional database. The LLM immediately loses access to that data, perfectly satisfying Article 17.

- Input and Output Guardrails: Specialized AI firewalls are deployed to actively monitor the model’s output. Even if the model managed to memorize a piece of PII during training, the output filter acts as a fail-safe, intercepting and redacting the personal data before it is ever displayed to an end-user.

Conclusion

The conflict between the GDPR and Generative AI highlights a recurring theme in modern IT: the law expects deterministic compliance from increasingly probabilistic systems. Until the science of Machine Unlearning matures, organizations must architect their AI deployments defensively. Questions of data sovereignty also become paramount when deciding where and how personal data is processed. By heavily utilizing RAG architectures and strict pre-training sanitization, IT leaders can harness the power of LLMs without crossing the formidable boundary of the Right to Be Forgotten.