Green IT & Security: The Energy Cost of Training Defensive Models

Analyze the intersection of Green IT and cybersecurity. Understand the massive carbon footprint of AI-driven security tools and how to build a sustainable DevSecOps architecture.

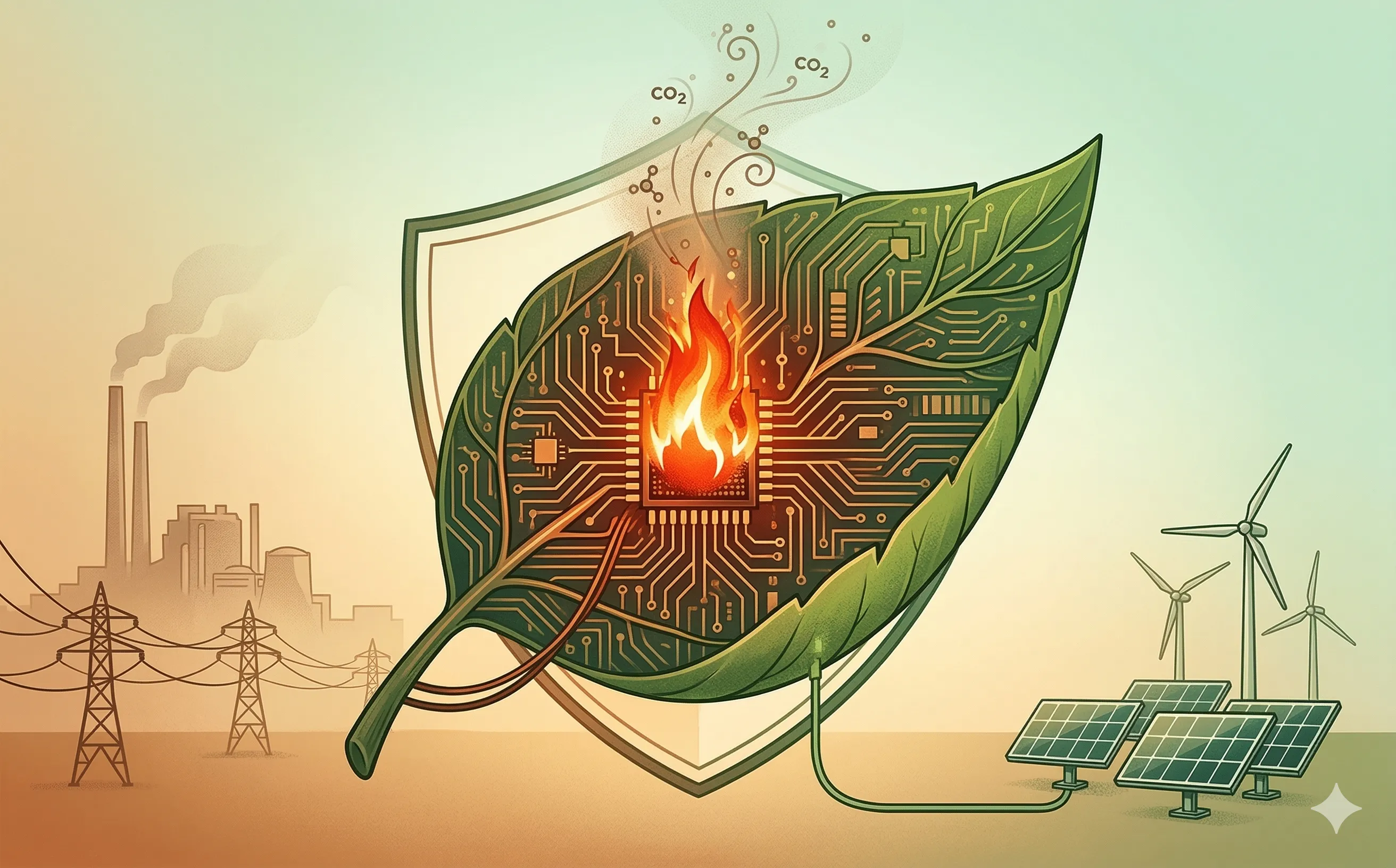

Introduction: The Hidden Cost of AI Defense

Over the past year, we have established that Artificial Intelligence is mandatory for defending the modern enterprise. From autonomous Red Teaming to ML-driven Cloud Security Posture Management, AI is the only tool capable of matching the speed and scale of today’s cyber threats. However, this defensive supremacy comes with a massive, often overlooked external cost: Energy Consumption.

For IT directors and Chief Information Security Officers (CISOs), cybersecurity can no longer be viewed in isolation from the organization’s Corporate Social Responsibility (CSR) and Environmental, Social, and Governance (ESG) goals. Training and running advanced machine learning models require vast data centers, specialized GPUs, and staggering amounts of electricity. As enterprise IT shifts toward “Green IT” initiatives, security leaders face a paradox: How do you protect your infrastructure from devastating cyberattacks without destroying your organization’s carbon reduction targets?

1. The Carbon Footprint of Defensive AI

To understand the scale of the problem, we must distinguish between the two phases of a machine learning model’s lifecycle: Training and Inference.

- The Training Burden: Training a foundational Large Language Model (LLM) or a complex behavioral detection neural network requires thousands of GPUs running at maximum capacity for weeks or months. This process consumes gigawatt-hours of electricity and generates hundreds of tons of CO2 equivalents.

- The Security Inference Tax: While training is highly energy-intensive, it is an infrequent event. For cybersecurity, the true energy drain is inference—the act of querying the model in real-time. If an enterprise deploys an AI-driven Web Application Firewall (WAF), the model must process, analyze, and score every single HTTP request and network packet entering the organization 24/7. Scaling this across a global cloud architecture results in a continuous, massive baseline of energy consumption.

2. Concept Drift and the Continuous Retraining Cycle

Unlike a standard software application that can be compiled once and run for years, defensive AI models degrade over time. This phenomenon is known as Concept Drift.

Adversaries continuously evolve their tactics, techniques, and procedures (TTPs). They develop new malware signatures, novel prompt injection techniques, and varied behavioral patterns. A machine learning model trained to detect the threat landscape of January will be dangerously inaccurate by June.

To maintain efficacy, the SOC (Security Operations Center) must implement a continuous retraining pipeline. The DevSecOps team must constantly feed the model new threat intelligence and retrain its neural weights. This means the massive energy cost of training is not a one-time capital expenditure; it is an ongoing, operational energy tax that permanently increases the IT department’s carbon footprint.

3. Sustainable Architecture: Small Language Models (SLMs)

Reconciling robust cybersecurity with Green IT requires a fundamental architectural shift. The era of throwing massive, billion-parameter LLMs at every minor security problem is ending. IT architects are transitioning to highly optimized, sustainable deployments.

- Right-Sizing the AI: You do not need GPT-4 to determine if a network packet is malformed. Instead of relying on massive general-purpose models, security teams are deploying Small Language Models (SLMs) and specialized, narrow-AI algorithms (like Random Forests or Gradient Boosting). These models are a fraction of the size, require exponentially less compute power to train, and execute inference in milliseconds with minimal energy overhead.

- Edge Computing: As discussed in our IoT Security analysis, moving the AI inference from massive centralized cloud data centers directly to the edge (the endpoint device or local router) drastically reduces the energy consumed by continuous data transmission. It spreads the thermal and electrical load across the network, enabling a more sustainable architecture.

4. The ESG Regulatory Mandate (CSRD)

For IT managers operating in the European Union, balancing security and sustainability is no longer just a moral preference; it is a strict legal requirement.

The Corporate Sustainability Reporting Directive (CSRD) forces large companies to comprehensively disclose their environmental impact, explicitly including their digital carbon footprint (Scope 3 emissions).

- Security as an Emitter: When an organization provisions highly intensive cloud security services, the energy consumed by the cloud provider (AWS, Azure, GCP) counts toward the organization’s own carbon reporting.

- The Procurement Shift: CISOs must now evaluate cybersecurity vendors not just on their detection rates, but on their energy efficiency. Security Request for Proposals (RFPs) increasingly require vendors to provide telemetry on the computational efficiency of their AI models and proof that their data centers run on renewable energy.

Conclusion

The future of enterprise IT management requires balancing existential threats. A catastrophic ransomware attack can bankrupt a company, but ignoring the energy demands of the security stack will inevitably lead to regulatory fines and public ESG failures. By adopting Green IT principles—right-sizing models, pushing inference to the edge, and demanding computational efficiency from vendors—IT leaders can build a defensive architecture that protects both the organization’s data and its environmental commitments.