Insider Threat Detection: Identifying Disgruntled Employees via Behavior

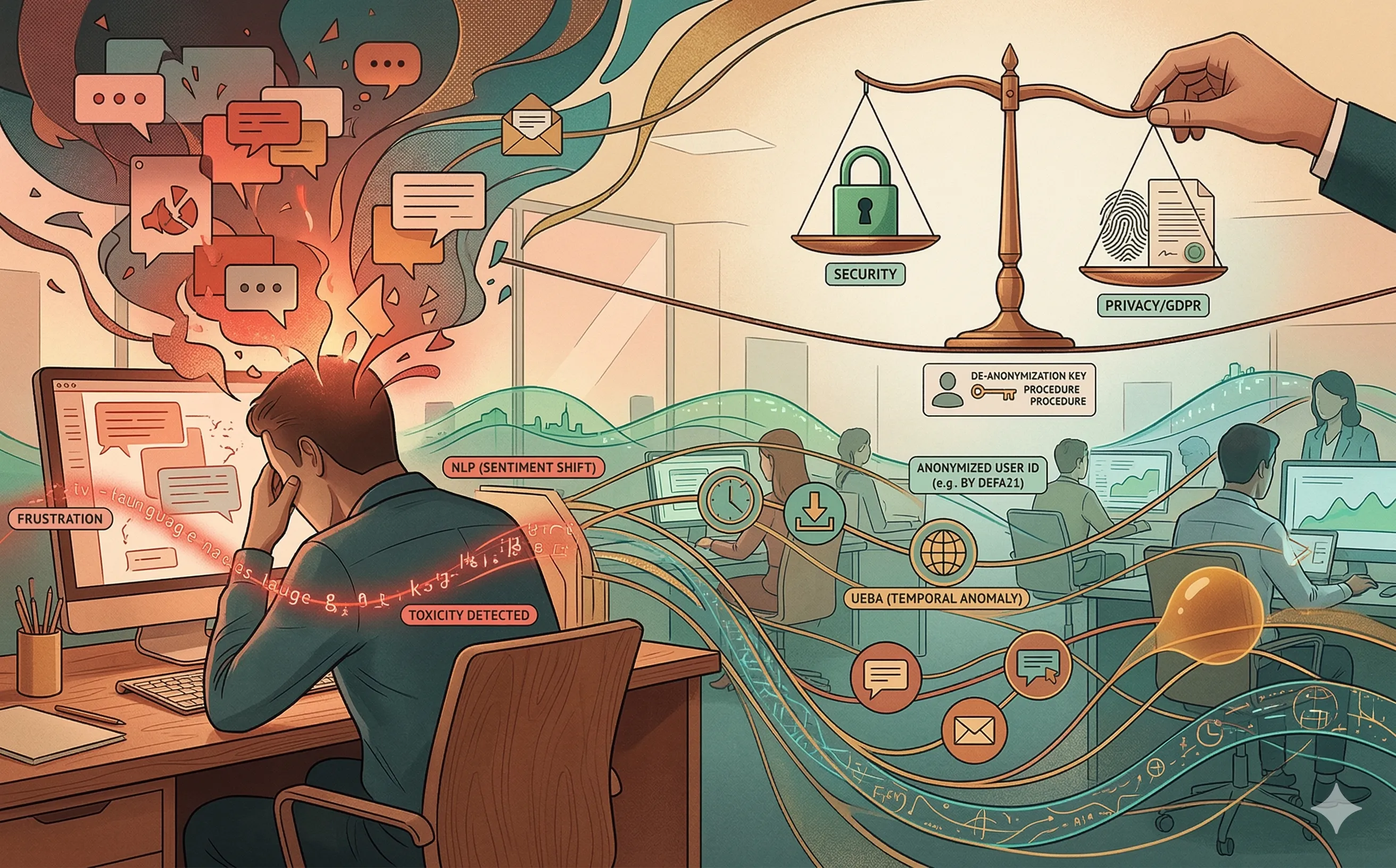

Explore how AI-driven UEBA and sentiment analysis detect malicious insider threats. Navigate the ethical and legal boundaries of monitoring employee behavior.

Introduction: The Threat from Within

When designing a cybersecurity architecture, IT leaders naturally focus on fortifying the perimeter against external threat actors: ransomware gangs, nation-states, and automated botnets. However, statistically, some of the most devastating data breaches and intellectual property thefts originate from inside the building.

Insider threats generally fall into three categories: the compromised account (an attacker stealing credentials), the negligent user (an employee accidentally exposing data), and the malicious insider (a disgruntled employee actively seeking to steal data or sabotage systems). Traditional security tools like Data Loss Prevention (DLP) gateways struggle to catch malicious insiders because these employees already have legitimate, authorized access to the data they are stealing. To detect hostile intent before data leaves the perimeter, organizations are shifting from rule-based monitoring to AI-driven behavioral and psycholinguistic analysis.

1. The Evolution of UEBA (User and Entity Behavior Analytics)

If a senior database administrator suddenly downloads 50GB of customer records at 3:00 AM on a Sunday, a static DLP rule might not flag it if their role technically permits database access.

To bridge this context gap, IT security teams deploy User and Entity Behavior Analytics (UEBA). UEBA relies on unsupervised machine learning to establish a mathematical baseline of “normal” behavior for every individual employee and their corresponding peer groups.

- Temporal and Spatial Anomalies: The AI learns a user’s typical working hours, their geographic login locations, and the specific VPN nodes they use.

- Volume and Velocity: The system tracks the normal volume of data an employee interacts with. If a software engineer who typically clones one or two repositories a week suddenly scripts an automated clone of the entire corporate GitHub organization, the UEBA engine flags a massive deviation from the baseline.

- Peer Group Analysis: The AI compares the user’s behavior not just to their own history, but to their active directory group. If one financial analyst begins accessing legacy M&A (Mergers and Acquisitions) folders that no other analyst has touched in months, the system dynamically elevates their risk score.

2. Sentiment Analysis and Psycholinguistics

While UEBA tracks what an employee is doing, modern AI tools are increasingly being used to analyze how an employee is feeling. By applying Natural Language Processing (NLP) to corporate communications (e.g., Slack, Microsoft Teams, corporate emails), organizations attempt to detect the early warning signs of a disgruntled employee.

- Toxicity and Sentiment Shifts: NLP models can scan internal messaging for sudden spikes in toxic language, expressions of deep frustration, or language indicating organizational detachment (often a precursor to flight risk).

- The “Fraud Triangle” Detection: Criminology utilizes the “Fraud Triangle” (Opportunity, Pressure, Rationalization) to explain white-collar crime. Advanced sentiment models look for linguistic markers of financial pressure or deep-seated resentment against management, flagging users who might be rationalizing upcoming data theft.

3. The Ethical and Legal Minefield of Employee Surveillance

For IT managers and HR leaders, deploying AI to monitor employee sentiment is a legal and ethical tightrope. The line between necessary security monitoring and dystopian workplace surveillance is incredibly thin.

- Data Privacy and the GDPR: In the European Union, the GDPR heavily restricts employee monitoring. Analyzing the sentiment of private employee communications borders on processing psychological data. Employees have a right to privacy even on corporate devices. Furthermore, Article 22 of the GDPR explicitly protects individuals from being subject to decisions based solely on automated processing.

- The “Creepy Factor” and Corporate Culture: If employees discover that an AI is actively reading their Slack messages to gauge their loyalty, it can instantly destroy workplace trust. This hyper-surveillance often backfires, creating the very disgruntlement and toxic culture the AI was deployed to detect. Shadow IT increases as employees move conversations to encrypted, unmonitored personal devices like WhatsApp or Signal to evade the AI.

4. Strategic Governance: The Human-in-the-Loop Model

To harness the security benefits of behavioral AI without violating ethics or labor laws, enterprise governance must mandate a strict Human-in-the-Loop (HITL) architecture.

- Anonymization by Default: The AI should not output a dashboard showing “John Doe is 85% likely to steal data.” Instead, the system must use pseudonymization. It should flag an anonymous user ID to the Security Operations Center (SOC) — ideally through an AI-powered SIEM — based on behavioral metadata, not their name.

- Cross-Departmental Escalation: De-anonymizing an employee and launching an insider threat investigation must never be an automated AI action. It should require a formal, documented “break-glass” procedure involving unanimous sign-off from IT Security, Human Resources, and Legal Counsel.

- Transparent Policies: Governance demands transparency. Organizations must explicitly outline in their Acceptable Use Policies exactly what behavioral and communication data is being analyzed by AI. Security is most effective when it is a shared cultural responsibility, not a covert surveillance operation.

Conclusion

Detecting a disgruntled employee before they exfiltrate critical intellectual property is the holy grail of defensive cybersecurity. AI and UEBA provide the necessary tools to see beyond static permissions and understand human intent. However, technology leaders must exercise profound strategic restraint. By prioritizing anonymization, strict legal compliance, and transparent HR governance, organizations can secure their most valuable assets without treating their own workforce as the enemy. A complementary Zero Trust architecture further ensures that even authorized users only ever access the minimum resources required for their role.