NIST AI Risk Management Framework: A Guide for Organizations

Discover how the NIST AI RMF helps organizations map, measure, and manage AI risks. Learn how to implement this critical framework for trustworthy AI.

Introduction: The Blueprint for Trustworthy AI

As organizations rush to integrate artificial intelligence into their operations, IT leaders face a daunting challenge: how do you manage the risks of a technology that is inherently probabilistic, opaque, and rapidly evolving? While regulations like the EU AI Act provide strict legal boundaries, they often lack the tactical, day-to-day blueprints engineering teams need to actually build secure systems.

Enter the NIST AI Risk Management Framework (AI RMF). Released by the U.S. National Institute of Standards and Technology, the AI RMF is a voluntary, foundational framework designed to help organizations incorporate trustworthiness into the design, development, use, and evaluation of AI products, services, and systems. Much like the renowned NIST Cybersecurity Framework (CSF) became the global gold standard for infosec, the AI RMF is quickly becoming the definitive playbook for enterprise AI governance.

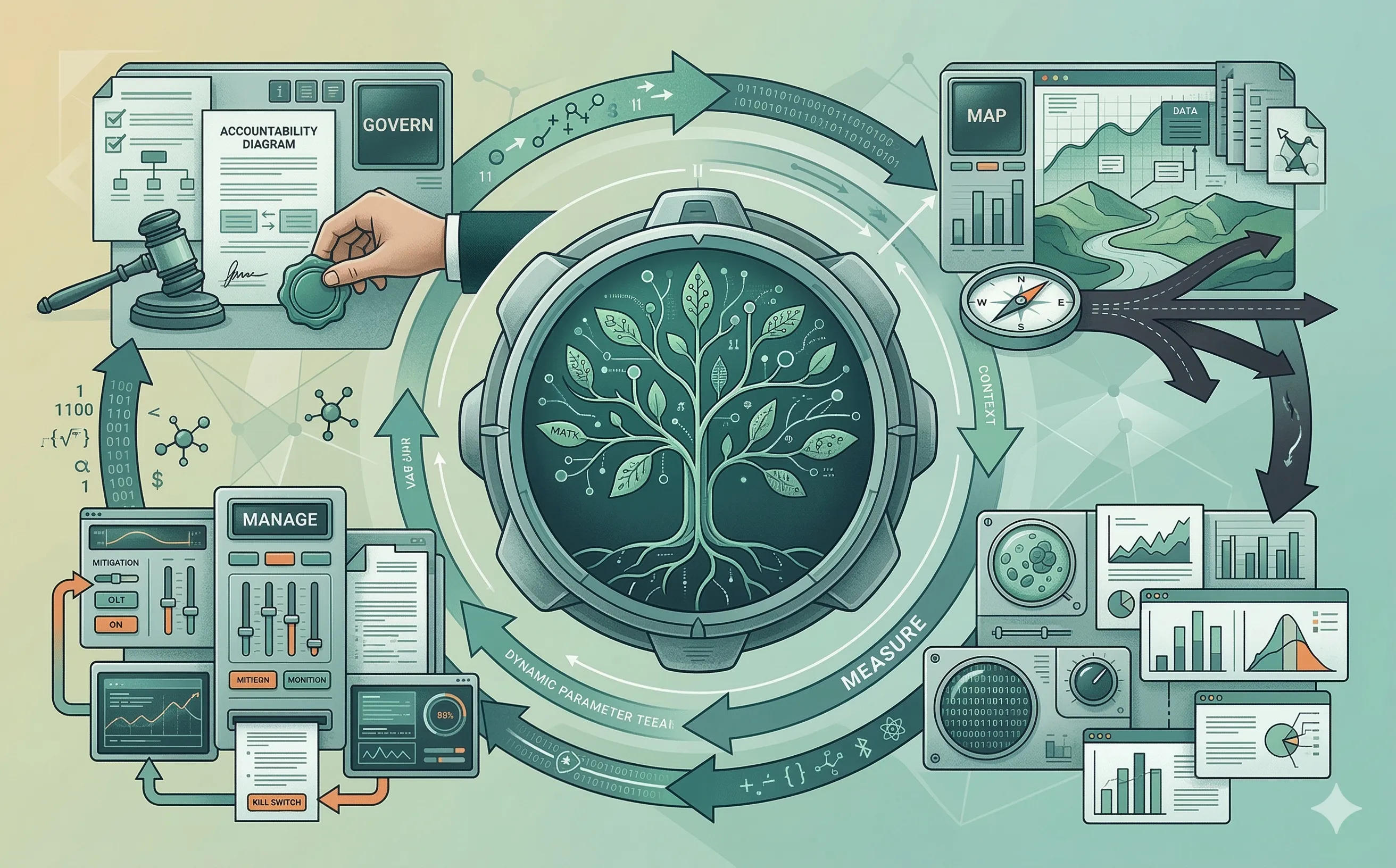

1. The Core Structure: Govern, Map, Measure, Manage

The NIST AI RMF is not a static checklist; it is a continuous, iterative lifecycle designed to adapt to the shifting nature of machine learning models. The framework is divided into four core functions that must operate in tandem.

Unlike traditional software development lifecycles, these functions are not strictly linear. Because AI models experience “concept drift” (their accuracy and behavior change over time as real-world data shifts), organizations must continuously loop through these four pillars to maintain a trustworthy posture.

- Govern: The culture, policies, and overarching accountability structures.

- Map: The context-setting phase where use cases, benefits, and potential harms are identified.

- Measure: The quantitative and qualitative assessment of the AI’s trustworthiness.

- Manage: The active prioritization, mitigation, and monitoring of identified risks.

2. Govern: The Foundation of AI Accountability

In the AI RMF, Govern sits at the center of the framework because without executive accountability and a culture of risk awareness, the other three functions will fail. AI risk cannot be entirely offloaded to the DevSecOps or data science teams; it requires a cross-functional mandate.

To implement the Govern function, organizations must:

- Establish Clear Ownership: Determine exactly who is accountable for the AI system’s outcomes. If an algorithmic HR screening tool exhibits racial bias, who signs off on the mitigation strategy?

- Define Risk Tolerance: Leadership must clearly articulate the organization’s threshold for AI risk. A marketing chatbot hallucinating a product feature carries a vastly different risk profile than an autonomous medical diagnostic tool.

- Foster Cross-Disciplinary Teams: The Govern function requires legal, compliance, ethics, and HR professionals to collaborate directly with machine learning engineers from the inception of the project.

3. Map and Measure: Context and Metrics

You cannot manage what you do not understand. The Map function forces organizations to thoroughly document the context of the AI deployment. This involves cataloging the data sources, understanding the limitations of the foundational model, and explicitly defining the intended (and potential unintended) use cases.

Once the terrain is mapped, the Measure function applies rigorous, objective testing. NIST outlines several characteristics of “Trustworthy AI” that must be measured:

- Validity and Reliability: Does the model actually do what it claims to do under stress?

- Safety and Security: Is the model resilient against adversarial attacks, prompt injection, and data poisoning? Tools like AI-powered SIEM platforms can help continuously monitor these threats in production.

- Fairness and Bias: Are there disparate impacts on protected demographic groups?

- Explainability and Transparency: Can the system’s output be understood and interpreted by a human operator?

4. Manage: Mitigation and Continuous Monitoring

The final core function is Manage. Once risks have been mapped and measured, the organization must allocate resources to prioritize and mitigate them.

Because AI systems are dynamic, the Manage function heavily emphasizes continuous monitoring.

- Incident Response for AI: Standard IT incident response plans often fail when applied to AI. If a model begins generating toxic content or leaking proprietary training data, the Manage function dictates pre-planned mitigation steps, such as rolling back to a previous model weight, initiating manual human-in-the-loop overrides, or triggering an automated “kill switch.”

- Feedback Loops: The framework requires mechanisms to gather real-world feedback from end-users and impacted communities, ensuring that the model’s performance in production aligns with the baselines established during the Measure phase.

Conclusion

The NIST AI RMF bridges the gap between high-level ethical principles and practical, boots-on-the-ground engineering. For IT professionals and technical managers, adopting this framework is no longer just a theoretical exercise; it is a strategic imperative. By implementing Govern, Map, Measure, and Manage, organizations can confidently deploy innovative AI solutions while demonstrating to auditors, regulators, and customers that they are actively securing the future of their software supply chain. For practical guidance on putting these principles into practice, see my article on the governance of AI-powered defense.