Secrets Management: Preventing AI from Hardcoding Keys

Learn how to prevent AI coding assistants from hardcoding secrets. Explore ML-driven secret scanning, pre-commit hooks, and dynamic vault integration.

Introduction: The Path of Least Resistance

Hardcoding secrets—API keys, database passwords, and cryptographic certificates (increasingly vulnerable to AI-driven password cracking)—directly into source code is one of the oldest and most dangerous sins in software development. Despite years of AppSec training, it remains the leading cause of massive data breaches.

With the introduction of AI coding assistants, this problem has accelerated. Large Language Models (LLMs) are trained to provide the fastest, most functional solution to a developer’s prompt. Often, the path of least resistance to make a script “just work” is to declare a global variable with a plaintext API key. If developers are not actively implementing robust secrets management architectures, AI assistants will gladly help them commit the digital keys to the kingdom straight to a public GitHub repository.

1. How AI Encourages Hardcoded Secrets

To understand how to stop AI from leaking secrets, we must understand why it happens in the first place. AI assistants learn from patterns in their training data. Unfortunately, open-source repositories are littered with tutorials, quick-start guides, and proofs-of-concept that heavily feature hardcoded placeholder credentials.

- The Placeholder Trap: When prompted to write a database connection string, an AI might generate

const dbPassword = "YOUR_PASSWORD_HERE";. A developer, rushing to test the code, replaces the string with the actual production password, fully intending to “fix it later.” The code works, they get distracted, and the secret gets committed. - Regurgitated Test Keys: Sometimes, the AI will generate code using actual, active API keys it ingested during training (a phenomenon known as data memorization). If a developer unknowingly uses this memorized key, their application might function temporarily but is tied to compromised infrastructure.

- Lack of Architectural Awareness: An AI coding assistant living in your IDE does not inherently know about your company’s HashiCorp Vault or AWS Secrets Manager implementation unless you explicitly prompt it to use those services.

2. ML-Enhanced Secret Scanning

The traditional defense against hardcoded secrets is a regex-based scanner running in the CI/CD pipeline. However, standard regex rules generate enormous amounts of false positives, flagging random test strings, commit hashes, or benign configuration variables.

Modern DevSecOps relies on Machine Learning-Enhanced Secret Scanning to solve this.

- Entropy and Context Analysis: Instead of just looking for the string “password,” ML models analyze the mathematical entropy (randomness) of the string and the surrounding syntactic context — much like how ML-enhanced SAST and DAST tools use context to reduce false positives. They understand the difference between a high-entropy 64-character AWS Access Key and a similarly long, but benign, base64-encoded image placeholder.

- Active Validation: The most advanced secret scanners don’t just flag the string; they autonomously ping the corresponding service provider’s API (e.g., Stripe, Slack, or GitHub) in the background to check if the discovered token is actually active and valid before alerting the developer.

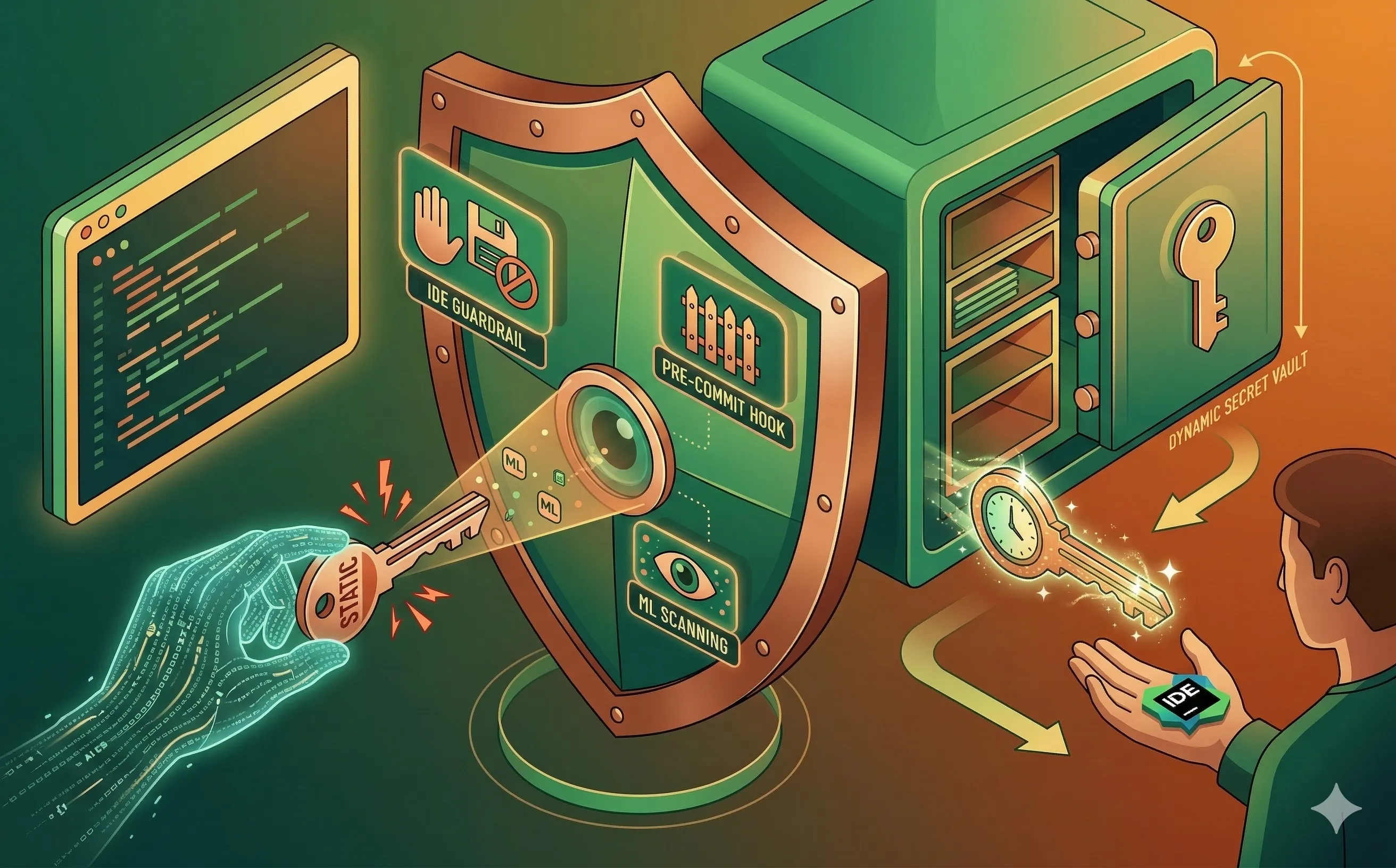

3. Shifting Left: IDE Guardrails and Pre-Commit Hooks

Finding a secret in a Git repository—even a private one—is often too late. Once a secret is in the commit history, it must be considered compromised and fully rotated, which causes operational downtime. The goal is to stop the secret from ever leaving the developer’s laptop.

- IDE-Integrated Scanners: Security teams must deploy lightweight secret scanning plugins directly into the developer’s IDE (VS Code, IntelliJ). If GitHub Copilot generates a block of code containing a suspicious token, or if the developer pastes one in, the IDE immediately highlights it in red, blocking the save action until the secret is removed.

- Pre-Commit Hooks: The ultimate failsafe is a Git pre-commit hook (like Talisman or GitGuardian’s

ggshield). When the developer typesgit commit, the hook runs a local ML scan over the staged changes. If a secret is detected, the commit is outright rejected, forcing the developer to refactor the code to use environment variables instead.

4. Designing for Dynamic Secrets

The most effective way to prevent AI from hardcoding secrets is to architect your application so that static secrets aren’t required in the first place. DevSecOps teams must guide developers (and their AI assistants) toward using Dynamic Secrets and Vaults.

- Prompt Engineering for Vaults: Developers should be trained to include secrets management instructions in their AI prompts. Instead of asking, “Write a Python script to connect to the AWS S3 bucket,” the prompt should be, “Write a Python script to connect to the AWS S3 bucket, fetching the temporary IAM credentials dynamically from HashiCorp Vault.” This approach also supports data sovereignty requirements by keeping credentials within controlled environments.

- Short-Lived Tokens: By integrating applications with dynamic secret managers, the application requests a database password that is generated on the fly and expires in 15 minutes. Even if an AI assistant somehow hallucinates or leaks this specific token during a debugging session, the blast radius is minimal because the credential self-destructs almost immediately.

Conclusion

AI tools optimize for speed, but security requires friction. Preventing AI from hardcoding keys requires a multi-layered approach: educating developers on secure prompt engineering, deploying ML-driven scanners in the IDE to catch mistakes in real-time, and architecting systems to rely on dynamic, short-lived credentials. By managing secrets properly, you ensure that your AI assistant is writing production-ready code, not writing a hacker’s next payload.