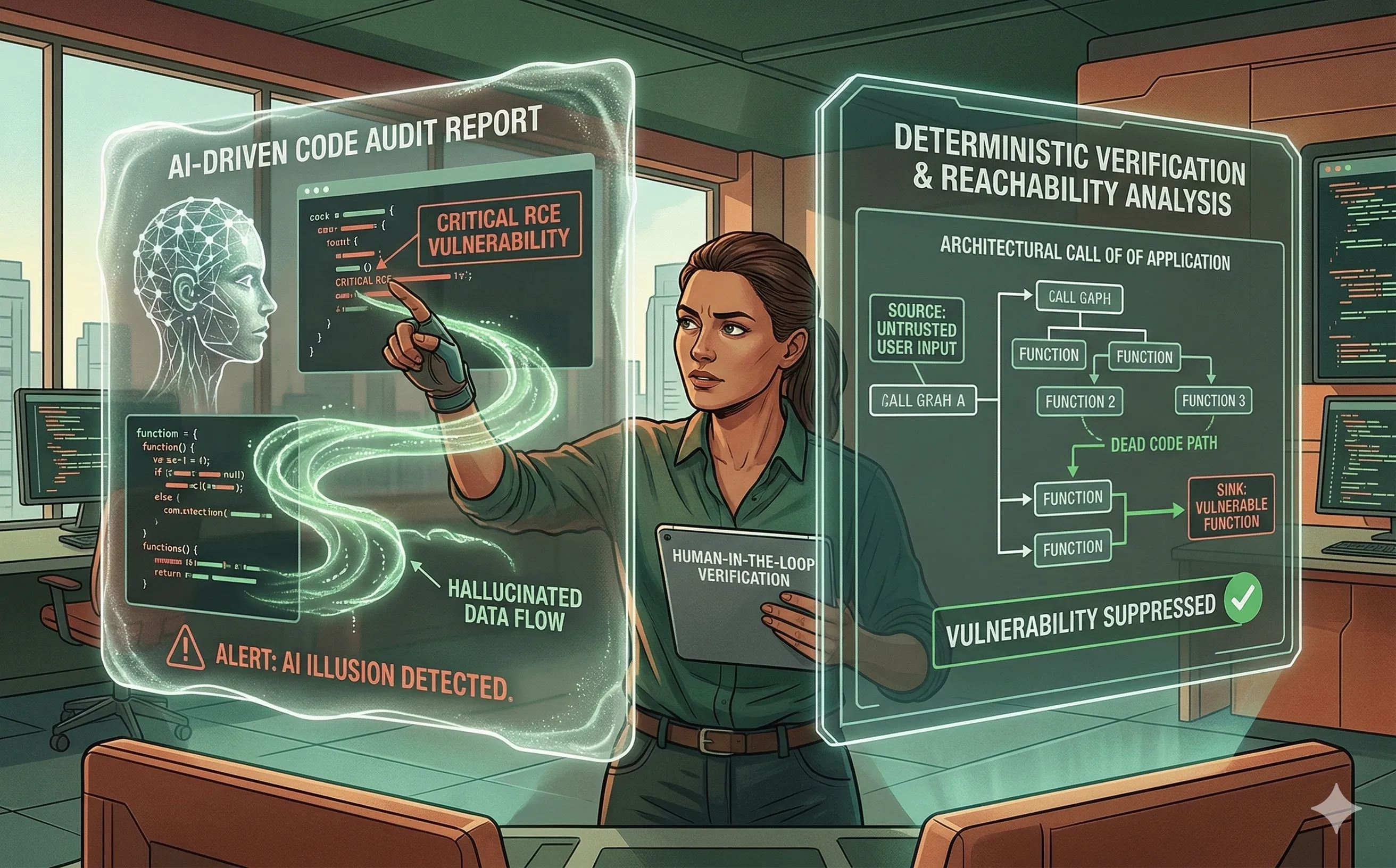

The Risk of "Hallucinated" Vulnerabilities: False Positives in Audits

Discover the hidden costs of AI-driven code audits. Learn why LLMs hallucinate vulnerabilities and how reachability analysis grounds AI in deterministic truth.

Nov 8, 2025 · 5 min read