The EU AI Act: Compliance Requirements for High-Risk Systems

Understand the EU AI Act's impact on enterprise IT. Learn the classification of high-risk AI systems and the mandatory compliance requirements for organizations ahead of the 2026 deadline.

Introduction: The GDPR of Artificial Intelligence

Welcome to the fourth and final series of our technology watch: Governance, Ethics & Future Trends. Over the past nine months, we have explored the tactical realities of AI in cybersecurity—from offensive prompt injection to defensive machine learning and DevSecOps. However, technology does not exist in a vacuum. For IT leaders and architects, deploying AI is no longer just a technical challenge; it is a profound legal and strategic liability.

The most significant regulatory shift in our industry since the GDPR is now underway: The European Union Artificial Intelligence Act (EU AI Act). Having officially entered into force in August 2024, the legislation features a phased rollout. While prohibitions on “unacceptable risk” systems are already active, the most critical deadline for enterprise IT teams is rapidly approaching: August 2, 2026. By this date, all “High-Risk” AI systems must fully comply with strict governance, transparency, and cybersecurity mandates.

1. The Risk-Based Approach and Classification

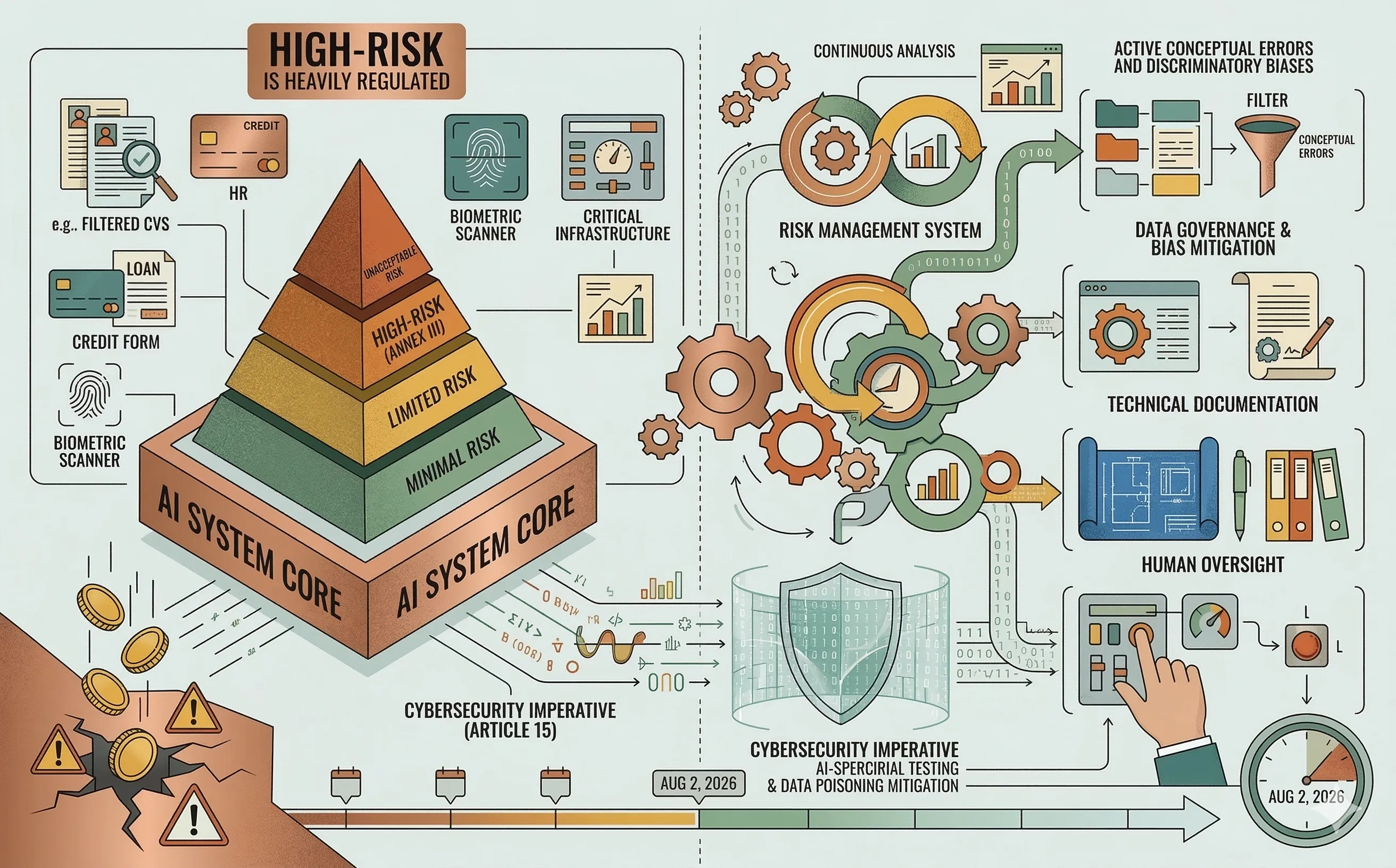

The EU AI Act does not regulate the underlying technology (like neural networks themselves); instead, it regulates the use case. It divides AI applications into a four-tier risk pyramid.

For enterprise organizations, the most crucial category to identify is High-Risk (Annex III). If your organization develops, deploys, or imports an AI system that falls into any of the following categories, you are heavily regulated:

- Employment and HR: AI used for recruiting, filtering CVs, monitoring employee productivity, or terminating contracts.

- Essential Private & Public Services: AI used for credit scoring (loan approvals) or determining life/health insurance premiums.

- Biometrics: Remote biometric identification and categorization systems.

- Critical Infrastructure: AI used as safety components in the management of digital infrastructure, water, gas, or electricity.

- Education: AI used to evaluate learning outcomes or determine admissions.

Note: AI systems embedded as safety components in physical products (like medical devices or vehicles) fall under Annex I and have a slightly longer compliance runway until August 2027.

2. Mandatory Compliance Requirements

If your system is classified as High-Risk, “moving fast and breaking things” is no longer legally permissible. Providers and deployers must implement a comprehensive compliance framework before the system can receive a CE marking and enter the EU market.

- Risk and Quality Management Systems: Organizations must establish a continuous, iterative risk management process throughout the entire lifecycle of the AI. This cannot be a one-time audit; it requires a permanent Quality Management System (QMS) to document how risks are identified and mitigated.

- Data Governance and Bias Mitigation: The Act explicitly mandates that the data sets used for training, validating, and testing must be relevant, representative, and, to the best extent possible, free of errors. You must prove that you have actively searched for and mitigated discriminatory biases in your training data.

- Technical Documentation and Record-Keeping: Black-box deployments are illegal for High-Risk systems. Providers must maintain exhaustive technical documentation detailing the model’s architecture, capabilities, and limitations. Furthermore, the system must automatically log events (traceability) so that authorities can investigate incidents if the AI makes a harmful decision.

- Human Oversight (Human-in-the-Loop): High-Risk systems must be designed in a way that allows natural persons to effectively oversee them. A human must be able to intervene, override the AI’s decision, or hit a “kill switch” if the system behaves unexpectedly.

3. The Cybersecurity Imperative (Article 15)

From a cybersecurity perspective, the EU AI Act elevates AppSec from a best practice to a legal mandate. Article 15 requires High-Risk AI systems to achieve appropriate levels of accuracy, robustness, and cybersecurity.

The legislation explicitly calls out AI-specific threat vectors. Organizations must prove that their High-Risk models are resilient against:

- Data Poisoning: Attempts by malicious actors to corrupt the training data.

- Adversarial Examples: Inputs designed to trick the model into making a false classification (e.g., bypassing biometric security).

- Model Flaws: Inconsistencies or faults within the operating environment.

For the cybersecurity team, this means traditional vulnerability scanning is no longer enough. You must implement AI-specific red-teaming and adversarial robustness testing to legally certify the system. For a deeper look at how to structure this within your organization, see my article on the governance of AI-powered defense.

4. The Cost of Non-Compliance

The financial penalties designed to enforce the EU AI Act are severe, surpassing even those of the GDPR.

- Prohibited Systems: Deploying an AI system with an “unacceptable risk” (e.g., social scoring or cognitive manipulation) carries fines of up to €35 million or 7% of total worldwide annual turnover, whichever is higher.

- High-Risk Non-Compliance: Failing to adhere to the strict obligations for High-Risk systems (e.g., skipping the conformity assessment or lacking human oversight) can result in fines of up to €15 million or 3% of total worldwide annual turnover.

- Misleading Authorities: Supplying incorrect or misleading information to regulators can trigger fines of up to €7.5 million or 1.5% of turnover.

(Note: The Act includes proportionate caps to ensure fines do not immediately bankrupt Startups and Small/Medium Enterprises).

Conclusion

The EU AI Act represents a fundamental shift in software engineering. As we move toward the August 2026 enforcement date for High-Risk systems, the role of the IT architect must evolve. We are no longer just building algorithms; we are engineering legally compliant, auditable, and transparent socio-technical systems. Compliance-by-design must be embedded into the very first sprint of any AI project.